To some seasoned developers, test-driven development (TDD) can initially seem like the dumbest thing ever. Once you’ve written a failing test, you are supposed to write only as much code as needed to make the test pass. No speculation about what you think you need in the future: not a week from now, not an hour from now, not even ten minutes from now. Per Bob Martin’s three laws of TDD, write no more production code than “sufficient to pass the one failing unit test.”

“But I know I will want a hashmap in the next test or two, because I’ll have a bunch of keys and values…”

You’re a seasoned developer, so you’re probably right most of the time when you say you’ll need it. And yes, if you’re following TDD, for now you must provide a simpler implementation. “That seems stupid; providing the simpler solution now means that I’ll be reworking it later to create the right one.”

Tradeoffs: Code-Test-Fix vs. Test-Code/Fix

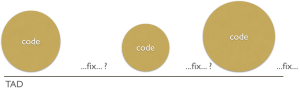

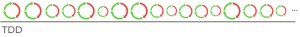

As with most things in computing, TDD is a tradeoff. It trades your current way of working, likely a code-test-fix cycle, for a test-code/fix cycle.

In a code-test-fix cycle (also known as test-after development, or TAD), you write what you think the proper code is, you design and run tests (whether manual or automated), and you fix any problems that you discover. The duration of each step (code, test, and fix) usually varies dramatically, anywhere from minutes to hours (and sometimes longer). The tests you write usually cover a large subset of the behaviors in the code you just wrote. A perfect developer would never need the fix step.

In a test-code/fix cycle, you define completion criteria for the code to be written in the form of automated tests. You write the code you think meets the behavior demonstrated in the tests, and fix any attempts that do not make the tests pass. You also clean the code. The duration of each step (test, code/fix, and refactor, also known as red-green-refactor in TDD) is fairly consistent and very short, ideally no more than 5 minutes. A perfect developer writes code that passes the test on the first attempt.

A key distinction of test-code/fix, then, is that the test you write determines the scope of the code to write. Your goal is to code only logic for the behavior within the scope of this test. If your code is insufficient, the tests do not pass.

Any code beyond what the tests specify falls outside the scope that they define. You can choose to write additional tests to describe (and vet) these “excess” behaviors, but you are now out of the TDD rhythm: such tests pass the first time you run them, so you never watch them fail.

You can of course choose not to write additional tests, in which case some unknown amount of the excess behaviors will be untested. It is a choice, but at this point you are no longer doing TDD, by definition.
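To make test-defined scope concrete, here is a minimal sketch in Python using the standard `unittest` module. The `Portfolio` class and its `is_empty` method are hypothetical names chosen for illustration; they do not come from any particular codebase. The single test asks only whether a new portfolio is empty, so the code answers only that.

```python
import unittest


class Portfolio:
    """Hypothetical class: it holds only what the one test below demands."""

    def is_empty(self):
        # Hard-coded on purpose. No test yet describes a non-empty
        # portfolio, so tracking purchases would be out of scope.
        return True


class APortfolio(unittest.TestCase):
    def test_is_empty_when_created(self):
        self.assertTrue(Portfolio().is_empty())
```

Adding a purchase method here, before a test asks for one, would be exactly the kind of excess behavior described above.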

What Behaviors Did You Add?

So what if you’re not doing TDD? So what if you’re not testing everything? Breaking the TDD rhythm (by writing code in excess of the tests defined) carries the same ramifications as doing TAD in general, ones that you’ve already learned to accept as a seasoned developer.

Confidence in code correctness is but one reason to practice TDD, however. The tests TDD creates can also document the voluminous choices you make as a developer. When you choose to add behaviors without providing tests, you encode this behavior in a way that is often not easily decoded: It can take a long time to uncover intent in the midst of any volume of code.

Well-designed tests can immediately impart the choices you make about the behaviors you designed into the code. They act as trustworthy documentation on the intended capabilities of the system.

Code Clarity

Even with well-designed tests that document choices made in the code, you can produce code that resists easy comprehension. You’re not likely a perfect writer: When you first write anything (an article, an email, a blog post, a tweet, etc.), you often bloat it with unnecessary words, or create text that’s difficult to decipher. Good writing is a process of getting your thoughts down, then returning to edit these thoughts for clarity.

And so it is with code. Even if you excel at writing the correct (test-passing) code out of the gate, chances are good that it’s a little messy, or even very messy. Perhaps it is code that others find difficult to understand. Perhaps it duplicates other concepts already in your system. Perhaps it violates your team standards. Perhaps it could be written more concisely (maybe using a construct you’re vaguely aware of, but you wanted to get the code working first). Perhaps you think of a slightly better name for the variable you chose, particularly once you re-read the code to yourself.

Getting ideas down in some form, then cleaning them up, is how most of us do and should work. The realization of prose on paper, or code in an editor, makes it easier for you (and others) to see the messiness in all its glory. Once it’s in our face, we know that we should clean it up.

TDD builds this editing process into the cycle. Once you produce code that works, you can immediately and safely shape it into something that will help decrease the cost of its maintenance.
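As a small illustration of that editing step, the Python sketch below shows a first draft and an edited draft of the same behavior. The function names and the `(symbol, shares)` tuple shape are assumptions made up for this example. Because both versions must satisfy the same assertions, the cleanup can be done without fear of changing behavior.

```python
def distinct_symbol_count_before(purchases):
    # First draft: it works, but the nested loop and flag obscure the intent.
    seen = []
    for purchase in purchases:
        found = False
        for symbol in seen:
            if symbol == purchase[0]:
                found = True
        if not found:
            seen.append(purchase[0])
    return len(seen)


def distinct_symbol_count_after(purchases):
    # Edited draft: same behavior, with the intent stated directly.
    return len({symbol for symbol, _shares in purchases})


# Illustrative sample data: (symbol, shares) pairs.
sample = [("AAPL", 10), ("IBM", 5), ("AAPL", 1)]
assert distinct_symbol_count_before(sample) == distinct_symbol_count_after(sample)
```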

Back to test-after: Adding untested code reduces your confidence about making changes to that code. Consciously or otherwise, you will similarly reduce the amount of code editing you do.

Is Speculative Generality Excess Code?

Suppose you’re tasked with building a stock portfolio. Along with supporting the purchase of shares by symbol (e.g., AAPL or IBM), the portfolio should report the number of distinct symbols it holds.

You’re an experienced developer. “I’m going to create this hashmap now to capture the number of shares purchased for each stock symbol, because that’s the solution I’m going to end up with.”

If the only test you’ve written so far is around purchasing shares for a given stock symbol, the following potential tests pass as soon as you run them–if you even think to write them:

- increases the number of distinct symbols on the purchase of a new stock symbol
- does not increase the number of distinct symbols when a purchase is made for a symbol already purchased

The immediately passing tests put you outside the realm of TDD. So yes, to answer this section’s titular question, your speculative hashmap represents excess code.
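The situation might look something like the following Python sketch. The `Portfolio` class and its method names (`purchase`, `shares_of`, `distinct_symbol_count`) are hypothetical, and the sketch assumes the count accessor was written alongside the dict, since the dict makes it a one-liner. Only the first test was written before the code; the other two are the after-the-fact tests, and neither ever fails.

```python
class Portfolio:
    """Hypothetical portfolio written with the speculative hashmap up front."""

    def __init__(self):
        # Maps symbol -> total shares purchased.
        self._shares_by_symbol = {}

    def purchase(self, symbol, shares):
        self._shares_by_symbol[symbol] = self.shares_of(symbol) + shares

    def shares_of(self, symbol):
        return self._shares_by_symbol.get(symbol, 0)

    def distinct_symbol_count(self):
        return len(self._shares_by_symbol)


def test_answers_shares_purchased_for_a_symbol():
    # The one test that drove the code above.
    portfolio = Portfolio()
    portfolio.purchase("AAPL", 10)
    assert portfolio.shares_of("AAPL") == 10


def test_increases_distinct_symbols_on_purchase_of_new_symbol():
    # Written afterward: passes on its first run.
    portfolio = Portfolio()
    portfolio.purchase("AAPL", 10)
    portfolio.purchase("IBM", 5)
    assert portfolio.distinct_symbol_count() == 2


def test_does_not_increase_distinct_symbols_on_repeat_purchase():
    # Written afterward: also passes on its first run.
    portfolio = Portfolio()
    portfolio.purchase("AAPL", 10)
    portfolio.purchase("AAPL", 5)
    assert portfolio.distinct_symbol_count() == 1
```

A test that has never been seen to fail offers weaker evidence that it checks what its name claims.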

The Cost of Unrealized Speculation

As suggested earlier, most of the time you’re probably right about the generalities you think you need. When you’re right, it may seem like a waste of time to incrementally rework code (by starting with a specific solution and generalizing it a bit with each test). Still, the incremental solution keeps you on a rhythm, creates documentation for all intents in the system, helps you avoid injecting defects, and allows you to keep the code clean incrementally.
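One way the incremental path could play out for the distinct-symbol count is sketched below in Python. The two class names are hypothetical and stand for the same class at two points in time. A bare counter is enough for a first test about buying a new symbol; the repeat-purchase test then fails against it, and that failure is what justifies generalizing to a set.

```python
class PortfolioAfterFirstTest:
    """Enough to pass: 'increases the count on purchase of a new symbol'."""

    def __init__(self):
        self._count = 0

    def purchase(self, symbol, shares):
        self._count += 1

    def distinct_symbol_count(self):
        return self._count


class PortfolioAfterSecondTest:
    """Generalized only once the repeat-purchase test demanded it."""

    def __init__(self):
        self._symbols = set()

    def purchase(self, symbol, shares):
        self._symbols.add(symbol)

    def distinct_symbol_count(self):
        return len(self._symbols)


def count_after_repeat_purchase(portfolio):
    # The scenario behind the second test: same symbol bought twice.
    portfolio.purchase("AAPL", 10)
    portfolio.purchase("AAPL", 5)
    return portfolio.distinct_symbol_count()


# Red: the counter over-counts, so the second test fails against it.
assert count_after_repeat_purchase(PortfolioAfterFirstTest()) != 1
# Green: the set-based version satisfies it.
assert count_after_repeat_purchase(PortfolioAfterSecondTest()) == 1
```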

Every once in a while, however, you aren’t right about the generalities needed. In the cases where you aren’t right, the incorrect and unneeded generality will cost you in the interim: The additional, unnecessary complexity can increase the effort required to read and maintain the code, over and over again across the lifetime of the system. (It can also raise questions about “why” and intent that can be hard to answer.) And when it comes time to support new behavior, it will usually take longer with an overly complex implementation than the simplest possible one.

You might view the incremental path that TDD promotes as a means of exploration. Seeking a simpler solution might open your mind up to other possibilities–things that you might not dream up if you race to the more comfortable, habitual solution that seems like it’s a foregone conclusion.

For the stock portfolio, a hashmap might seem like the proper projection, but it turns out that using a time series is better suited to historical data and can also result in simpler code.
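The article does not show its time-series design, so the Python sketch below is only one guess at what such a structure might look like. It assumes a hypothetical `Purchase` record and an append-only list in purchase order; the method names and the dates in the usage lines are invented for illustration. Share totals and the distinct-symbol count are derived from the history rather than stored separately.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Purchase:
    # Hypothetical record type for one entry in the series.
    symbol: str
    shares: int
    on: date


class Portfolio:
    def __init__(self):
        # Append-only history, in the order purchases were made.
        self._purchases = []

    def purchase(self, symbol, shares, on):
        self._purchases.append(Purchase(symbol, shares, on))

    def shares_of(self, symbol):
        return sum(p.shares for p in self._purchases if p.symbol == symbol)

    def distinct_symbol_count(self):
        return len({p.symbol for p in self._purchases})

    def purchases_of(self, symbol):
        # History comes for free; a symbol -> shares dict would have lost it.
        return [p for p in self._purchases if p.symbol == symbol]


# Illustrative usage with made-up dates.
portfolio = Portfolio()
portfolio.purchase("AAPL", 10, date(2024, 1, 2))
portfolio.purchase("IBM", 5, date(2024, 1, 3))
portfolio.purchase("AAPL", 5, date(2024, 2, 1))
assert portfolio.shares_of("AAPL") == 15
```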

More Up-Front Design?

It’s possible you’re crying foul right about now: “If I had all the requirements up front for the portfolio, particularly ones around tracking purchase history and auditing, I might have come up with the best possible design.” Maybe. It’s also possible that your predisposition to certain kinds of solutions might have led you to a design that boxed you into a constrained and inflexible solution.

A key goal of agile software development is to support and embrace change. With each iteration, a product owner can introduce new features–things never previously conceived. These interests can come about as a result of feedback from a number of events, including changes in the marketplace, changes to what a specific customer seeks, technology advances, and education regarding better techniques.

For example, no major U.S. airline carrier had supported baggage fees before 2008; it’s likely that few airlines had ever imagined them. When American Airlines introduced baggage fees in May of that year, the other carriers scrambled to incorporate a feature that their systems likely didn’t support so easily.

TDD: A Microcosm of Agile Iterations

The TDD cycle in a sense is a microcosm of a well-executed agile process:

- Define what you want to do in the form of a “specification by example.”
- Deliver something that meets that specification.
- Repeat, incrementally iterating and building on what’s working so far.

Both TDD and agile iterative development are feedback-driven: A key goal for each is the ability to change directions easily if new information demands it.

In agile, you don’t build support for features that the product owner doesn’t ask for. Similarly, to succeed with TDD, you must adhere to and trust a core rule of TDD: Once you’ve watched a test fail, you may write only the code necessary to make the tests pass.